According to the complaint, which was obtained by AFP, the council is suing the French branches of the two tech giants for "broadcasting a message with violent content abetting terrorism, or of a nature likely to seriously violate human dignity and liable to be seen by a minor."

Such transgressions in France can result in an $85,000 fine and up to three years' imprisonment for those found guilty, multiple reports state.

Tech companies have been heavily criticized for allowing the gruesome attack to be streamed on Facebook and then shared on YouTube and Twitter. Facebook says the first user report on the original video came in 29 minutes after the broadcast began; the clip was taken down shortly after that — exactly when isn't clear. However, that did not prevent the video from being shared widely on YouTube and Twitter.

According to a March 20 press release by Facebook, the attacker's video was removed from the platform "within minutes of New Zealand police's outreach." The tech giant also added that the video was "viewed fewer than 200 times during the live broadcast" and that "no users reported the video" while it was being live streamed. The video was viewed about 4,000 times before it was removed from Facebook, and a link to a copy of the video was shared by a user on the message board 8chan, the press release adds.

"In the first 24 hours, we removed more than 1.2 million videos of the attack at upload, which were therefore prevented from being seen on our services. Approximately 300,000 additional copies were removed after they were posted," Facebook added in its press release.

Facebook's artificial intelligence (AI) systems did not flag the video of the attack quickly; according to the company, the footage did not trigger its automatic detection systems.

"AI systems are based on ‘training data,' which means you need many thousands of examples of content in order to train a system that can detect certain types of text, imagery or video. This approach has worked very well for areas such as nudity, terrorist propaganda and also graphic violence where there is a large number of examples we can use to train our systems. However, this particular video did not trigger our automatic detection systems," Facebook explained in its post, also adding that for AI automatic detection algorithms to work properly, the systems need to have "large volumes of data" that are of a "specific kind of content."

Last Tuesday, New Zealand's three major telecommunications companies (Spark, Vodafone NZ and 2degrees) sent an open letter to the CEOs of Facebook, Twitter and Google.

"We call on Facebook, Twitter and Google, whose platforms carry so much content, to be a part of an urgent discussion at an industry and New Zealand Government level on an enduring solution to this issue," the letter states.

"Although we recognize the speed with which social network companies sought to remove Friday's video once they were made aware of it, this was still a response to material that was rapidly spreading globally and should never have been made available online."

"We believe society has the right to expect companies such as yours to take more responsibility for the content on their platforms… For the most serious types of content, such as terrorist content, more onerous requirements should apply, such as proposed in Europe, including take down within a specified period, proactive measures and fines for failure to do so. Consumers have the right to be protected whether using services funded by money or data," the letter adds.

Last week, US House of Representatives Homeland Security Committee Chair Bennie Thompson also asked tech leaders to explain the viral spread of the attack on their platforms, highlighting what he described as a disparity between how the platforms remove terrorist content connected to ISIS and al-Qaeda and how they handle content by "other violent extremists, including far-right violent extremists."

"Your companies must prioritize responding to these toxic and violent ideologies with resources and attention," Thompson added in his letter. "If you are unwilling to do so, Congress must consider policies to ensure that terrorist content is not distributed on your platforms — including by studying the examples being set by other countries."

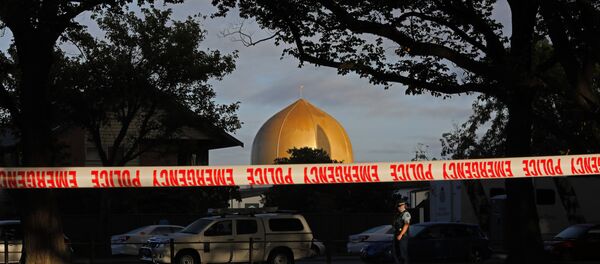

The shooting attack on two mosques stunned Christchurch, New Zealand, on March 15, leaving 50 dead and dozens injured. New Zealand Prime Minister Jacinda Ardern called it the country's "darkest day."

Australian right-wing extremist Brenton Tarrant confessed to the attack and was charged with murder soon after the massacre. On March 16, a New Zealand court ordered that he remain in custody until his next court appearance on April 5.